Untar linux command example

8/31/2023

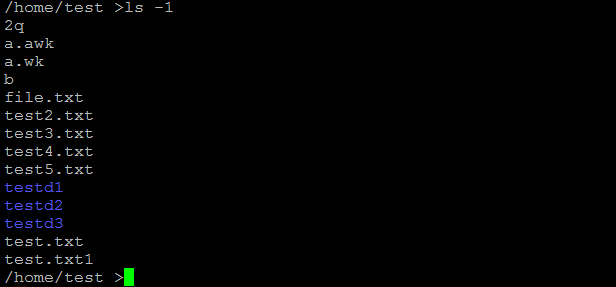

For those who do not have rpm2cpio, here is the ancient rpm2cpio.sh script that extracts the payload from a *.rpm package. Reposted for posterity … and the next generation.

    ...
    o=`expr $o + $sigsize + \( 8 - \( $sigsize \% 8 \) \) \% 8 + 8`
    ...
    COMPRESSION=`($EXTRACTOR |file -) 2>/dev/null`
    ...
    elif echo $COMPRESSION |grep -q bzip2; then
    ...
    elif echo $COMPRESSION |grep -iq xz; then # xz and XZ safe
    ...
    elif echo $COMPRESSION |grep -q cpio; then
    ...
    # Most versions of file don't support LZMA, therefore we assume
    ...
    * ) DECOMPRESSOR=`which lzmash 2>/dev/null`
    ...

The "DECOMPRESSION" test fails on Cygwin, one of the most potentially useful platforms for it, because the "grep" check for "xz" is case sensitive. The result of the "COMPRESSION:" check is: COMPRESSION='/dev/stdin: XZ compressed data'. Simply replacing 'grep -q' with 'grep -q -i' everywhere seems to resolve the issue well. I've done a few updates, particularly adding some comments and using "case" instead of stacked "if" statements, and included that fix below:

    #!/bin/sh
    # rpm2cpio.sh - extract 'cpio' contents of RPM
    # Typical usage: rpm2cpio.sh rpmname | cpio -idmv
    ...
    DECOMPRESSOR="`which unlzma 2>/dev/null`"
    ...
    echo "Warning: DECOMPRESSOR not found, assuming 'cat'" 1>&2
    ...

If you have tried the rpm2cpio.sh script above and it didn't work, you can save the following script and invoke it like this: rpm2cpio.sh rpmname | cpio -idmv. It works on my CentOS 7.

    ...
    *) fatal "File doesn't look like rpm: $pkg"
    ...
    # strip \0 as it gets dropped with warning otherwise
    b="$(_dd $(($offset + $i)) bs=1 count=1 | tr -d '\0' ; echo .)"
    ...

Is there a simple shell command/script that supports excluding certain files/folders from being archived? I have a directory that needs to be archived, with a subdirectory that has a number of very large files I do not need to back up. The tar --exclude=PATTERN option matches the given pattern and excludes those files, but I need specific files and folders to be ignored (full file path), otherwise valid files might be excluded.

I could also use the find command to create a list of files, exclude the ones I don't want to archive, and pass the list to tar, but that only works for a small number of files. I'm beginning to think the only solution is to create a file with a list of files/folders to be excluded, then use rsync with --exclude-from=file to copy all the files to a tmp directory, and then use tar to archive that directory.

Can anybody think of a better/more efficient solution?

EDIT: Charles Ma's solution works well. The big gotcha is that the --exclude='./folder' MUST be at the beginning of the tar command, before the directory being archived. Full command (cd first, so backup is relative to that directory):

    cd /folder_to_backup
    tar --exclude='./folder' --exclude='./upload/folder2' -zcvf /backup/filename.tgz .

Old question with many answers, but I found that none were quite clear enough for me, so I would like to add my try. Say you have the structure /home/ftp/mysite/, containing files and folders such as /home/ftp/mysite/file1. You want to make a tar file that contains everything inside /home/ftp/mysite (to move the site to a new server), but file3 is just junk, and everything in folder3 is also not needed, so we will skip those two.

In the options, c = create, z = gzip compression, v = verbose (you can see the files as they are added, which is useful for making sure none of the files you exclude are being included), and f = the archive file name follows. So, my command would look like this:

    cd /home/ftp/
    tar -czvf mysite.tar.gz --exclude='file3' --exclude='folder3' mysite

Note that the excluded files/folders are relative to the root of your tar (I tried a full path relative to / here, but I could not make that work). Hope this will help someone (and me next time I google it).

After reading all these good answers for different versions and having solved the problem for myself, I think there are very small details that are very important, and rare in general GNU/Linux use, that aren't stressed enough and deserve more than comments.
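The tar exclusion behaviour discussed in this post can be tried out safely in a scratch directory before running it on real data. The sketch below is not from the original answers: the temporary directory and the file names keep.txt and skip.txt are made up for illustration, and it assumes a tar that supports --exclude and -z (GNU tar and bsdtar both should).

```shell
#!/bin/sh
# Illustrative only: build a throwaway copy of the mysite layout.
# keep.txt and skip.txt are hypothetical names, not from the answers.
set -eu
work=$(mktemp -d)
mkdir -p "$work/mysite/folder1" "$work/mysite/folder3"
touch "$work/mysite/file1" "$work/mysite/file2" "$work/mysite/file3"
touch "$work/mysite/folder1/keep.txt" "$work/mysite/folder3/skip.txt"

cd "$work"
# Excludes go before the directory to archive, as the answers recommend.
tar -czf mysite.tar.gz --exclude='file3' --exclude='folder3' mysite

# List the archive contents to confirm what was actually stored.
listing=$(tar -tzf mysite.tar.gz)
printf '%s\n' "$listing"

# When finished, the scratch directory can be removed with: rm -rf "$work"
```

Listing the archive with tar -tzf is a cheap way to verify an exclude pattern, since a pattern that matches nothing is otherwise easy to miss until the archive is unpacked on the new server.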
